Intel Announces Advancements In Hardware, Cloud And Connectivity, Collaborates With Facebook

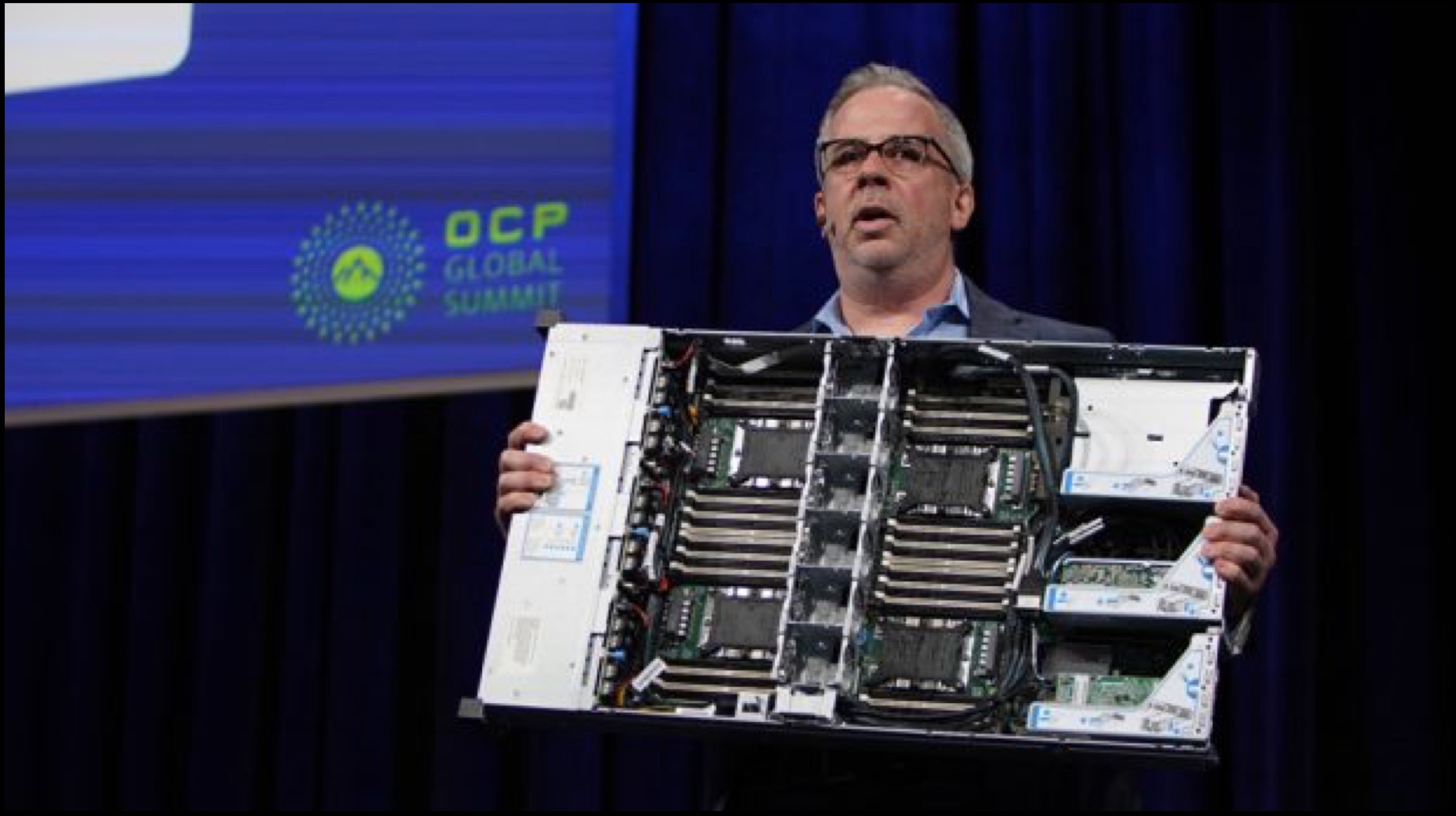

Researchers predict that artificial intelligence (AI) will make its way into a majority of computer systems by 2023. Intel has long backed AI, and the company runs a Developer Program to encourage programmers to build AI systems on Intel’s Xeon chips. At the Open Compute Project (OCP) Global Summit, the company unveiled new hardware advancements, which it says will improve computing capabilities and cost efficiency for hardware developers.

The company stated that a huge amount of data remains untapped and that it will design hardware that lets its systems process this data efficiently. Intel also believes that new systems are required to manage the ever-changing workloads and deployment models that drive data-based infrastructure. To that end, Intel and OCP are working together. Intel claims that its expertise in AI and engineering will enable it to produce products and technologies that lay the foundation for innovation in this area.

Intel said at the event that its new cloud-optimized platform for Xeon processors will use a 4-socket reference design. This brings the core count up to 112 in a single 2U platform (28 cores per socket) and greatly boosts memory bandwidth. Companies such as Dell, HP, Lenovo and Quanta are expected to deliver cloud-optimized Xeon systems based on this reference design, which might reach the market by the end of 2019.

Intel Collaborates With Facebook For Upcoming Processors

Intel has announced that it will team up with the social media and networking giant Facebook to work on Intel’s upcoming 14nm Cooper Lake Xeon processor family, which will support bfloat16. Bfloat16 is a 16-bit floating-point representation that offers a dynamic range similar to that of the standard 32-bit representation while using half the bits, which boosts the performance of deep learning systems. This should greatly improve the speed of deep learning workloads, which in turn will help develop new systems such as recommendation engines, speech recognition and machine translation.
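
To illustrate why bfloat16 keeps roughly the same dynamic range as a 32-bit float, here is a minimal Python sketch. It assumes the common definition of bfloat16 as the upper 16 bits of an IEEE 754 single-precision value (1 sign bit, 8 exponent bits, 7 mantissa bits). The function names are hypothetical and chosen for illustration only. The conversion simply truncates the low bits, whereas real hardware typically rounds, so this is a simplification and not a description of Intel's implementation.

```python
import struct


def float32_to_bfloat16_bits(x: float) -> int:
    """Return a 16-bit bfloat16 pattern for x (hypothetical helper).

    Assumption: bfloat16 is the top 16 bits of the float32 encoding.
    The low 16 mantissa bits are dropped by truncation, not rounding.
    """
    (bits32,) = struct.unpack(">I", struct.pack(">f", x))
    return bits32 >> 16


def bfloat16_bits_to_float(bits16: int) -> float:
    """Expand a 16-bit bfloat16 pattern back to a Python float."""
    (value,) = struct.unpack(">f", struct.pack(">I", bits16 << 16))
    return value


def roundtrip(x: float) -> float:
    """Convert x to bfloat16 and back to show what precision survives."""
    return bfloat16_bits_to_float(float32_to_bfloat16_bits(x))


if __name__ == "__main__":
    for sample in (1.0, 3.14159, 3.0e38, 1.0e-30):
        print(sample, "->", roundtrip(sample))
```

Very large and very small values survive the round trip because the 8 exponent bits are untouched. Only the fine precision is lost: 3.14159 comes back as 3.140625.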

Furthermore, the American multinational technology company announced the release of new OCP-compliant network interface controllers, which carry the OCP v3.0 branding. The controllers cover speeds from 1 GbE (Gigabit Ethernet) to 100 GbE. Intel will also contribute its new Rack Management Module code to the OCP community to increase its engagement in OCP systems.